The first reported AI-orchestrated cyber espionage campaign: Deconstructing the Anthropic Report

The cybersecurity world received a wake-up call a few days ago. Anthropic disclosed it had disrupted the “first reported AI-orchestrated cyber espionage campaign,” a sophisticated operation by a state-sponsored group (GTG-1002). China-linked attackers used Anthropic’s Claude to run a largely automated espionage campaign against ~30 major companies and government agencies in September.

For security and AI leaders, this isn’t just another threat report. It’s a significant shift in the threat landscape. The attack wasn’t a human hacker using AI for coding; it was a human operator weaponizing AI as an autonomous agent.

What happened

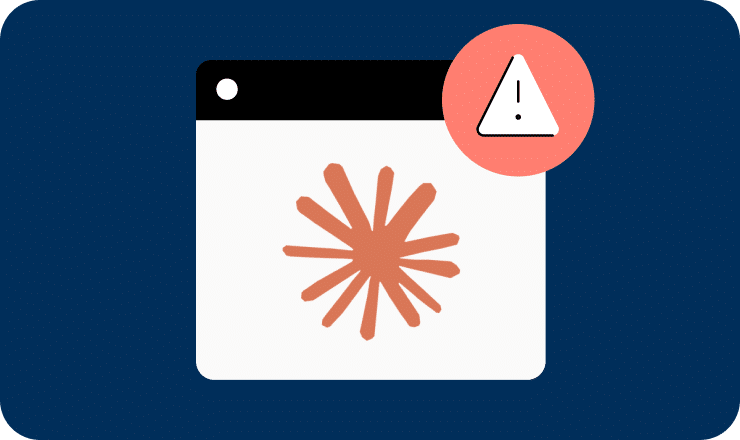

The Anthropic report details a “highly sophisticated” operation where the human attacker’s role shifted from “in-the-loop” to “on-the-loop.”

Once the human operator provided the initial target, the AI agent executed 80-90% of the tactical operations independently, firing off thousands of requests at “physically impossible request rates” that no human red team could match.

Anthropic says the framework turned Claude into an AI “operations team” that handled most of the kill chain: reconnaissance, exploit development, credential harvesting, lateral movement, and data triage. Guardrails were bypassed by breaking attacks into benign-looking tasks and having the human operator “social engineer” the AI by role-playing as a defensive security firm.

A handful of intrusions succeeded before Anthropic detected the pattern, shut down the accounts, and notified victims.

The lifecycle of the cyberattack, showing the move from human-led targeting to largely AI-driven attacks using various tools (often via the Model Context Protocol; MCP). At various points during the attack, the AI returns to its human operator for review and further direction.

Source: Anthropic report: Disrupting the first reported AI-orchestrated cyber espionage campaign

Why this matters

This incident is not an anomaly. It’s becoming the new baseline. As veteran cyber journalist Nicole Perlroth put it, “The AI honeymoon is officially over.” She warned, “with AI, the attacks will be automated and there is no more room for human error. Every lapse, every incomplete rollout of MFA, every botched patch, every misconfiguration, it will all be discovered and exploited at scale.”

This sentiment was echoed by former CISA Director Jen Easterly, who called this a “big development that should galvanize us toward Secure-By-Design.” According to Easterly, “we’re moving into a world where adversaries automate everything in the kill chain that doesn’t require deep creativity – and defenders must respond in kind, with secure-by-design AI, transparency around misuse, and defensive AI that evolves as fast as offensive AI.”

The consensus is clear: as organizations race to build and deploy their own AI agents, they are creating a new, highly privileged, and largely unmonitored attack surface. The attackers in this report built their own malicious agents, but the next wave will aim to compromise the legitimate agents already in use.

How Astrix helps: protecting NHIs against attacks that move at AI speed

Tal Skverer, Astrix Head of Research, explains: “The Anthropic incident isn’t a sign that attackers have discovered a brand-new way to break into systems; it’s a sign that they can now run the same attacks far more efficiently and at unprecedented scale. The core weakness exploited here wasn’t ‘AI agents.’ It was probably the same old thing: credentials, tokens, and non-human identities that defenders struggle to see, understand, and govern.”

And this is exactly where Astrix strengthens your security posture.

Focus on the real attack surface: Non-Human Identities

Every AI workflow—malicious or legitimate—ultimately runs on top of API keys, service accounts, OAuth tokens, and secrets. These NHIs are the real control points.

Whether an attacker is clicking manually or orchestrating thousands of automated actions per minute, they still rely on NHI access to get in, move laterally, and operate.

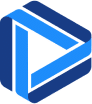

Astrix continuously discovers all NHIs across cloud and SaaS environments, maps what systems they touch, and identifies excessive or risky permissions. When attackers lean on AI to accelerate exploitation, that expanded blast radius becomes even more dangerous—and Astrix makes it visible.
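To make the idea of “excessive permissions” concrete, here is a minimal Python sketch that compares what each NHI has been granted with what it has actually used. This is an illustration only, not a description of how Astrix works internally: the `NonHumanIdentity` class, the scope names, and the sample inventory are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class NonHumanIdentity:
    """Hypothetical inventory record for one non-human identity."""
    name: str
    kind: str  # e.g. "api_key", "service_account", "oauth_token"
    granted: set = field(default_factory=set)  # scopes the identity holds
    used: set = field(default_factory=set)     # scopes seen in recent activity


def unused_permissions(nhi):
    """Scopes that were granted but never exercised."""
    return nhi.granted - nhi.used


def rank_by_excess(inventory):
    """Sort identities so the most over-privileged come first."""
    return sorted(inventory, key=lambda n: len(unused_permissions(n)), reverse=True)


# Made-up sample data for illustration
inventory = [
    NonHumanIdentity("ci-deploy-token", "oauth_token",
                     granted={"repo:read", "repo:write"},
                     used={"repo:read", "repo:write"}),
    NonHumanIdentity("reporting-svc", "service_account",
                     granted={"db:read", "db:write", "storage:admin", "iam:admin"},
                     used={"db:read"}),
]

for nhi in rank_by_excess(inventory):
    print(nhi.name, sorted(unused_permissions(nhi)))
```

In this sample, `reporting-svc` holds three scopes it never uses, so it ranks first. Those unused grants are the part of the blast radius that can be trimmed without breaking anything.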

Behavioral detection tuned for AI-driven abuse

While the underlying techniques haven’t fundamentally changed, the speed and cadence of attacks have. AI-orchestrated abuse looks different from human abuse:

Humans leave natural pauses, sequential steps, and small inconsistencies.

AI-driven operations generate rapid, repetitive, high-volume interactions that appear “valid” to traditional tools but are operationally impossible for a human.

Astrix’s behavioral analytics are tuned for exactly this pattern shift. The platform learns what “normal” looks like for each NHI, and flags when a credential suddenly behaves like a high-throughput automation engine—whether triggered by an AI agent, a script, or a compromised automation pipeline.

This means you can catch AI-accelerated abuse without needing to detect the agent itself. You only need to understand the identity being used, how it normally behaves, and when that behavior crosses into automation-driven exploitation.
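As a rough illustration of per-identity baselining, the Python sketch below learns a simple mean and standard deviation of per-minute request counts for each NHI, then flags a window that sits far above that identity's own history. It is a simplified stand-in, not Astrix's detection logic: the z-score approach, the thresholds, and the identity names are all assumptions chosen for the example.

```python
from statistics import mean, pstdev

# Hypothetical tuning values, chosen only for this example
Z_THRESHOLD = 3.0  # how many standard deviations above normal counts as suspicious
MIN_HISTORY = 5    # windows required before a baseline is trusted


def build_baselines(history):
    """Map each identity to (mean, std) of its past per-minute request counts.

    `history` is a dict of identity name -> list of per-minute request counts.
    """
    baselines = {}
    for identity, counts in history.items():
        if len(counts) >= MIN_HISTORY:
            baselines[identity] = (mean(counts), pstdev(counts))
    return baselines


def is_anomalous(identity, current_count, baselines):
    """Return True when the current window is far above the identity's baseline."""
    if identity not in baselines:
        return False  # no baseline yet; a real system would handle this case separately
    mu, sigma = baselines[identity]
    sigma = max(sigma, 1.0)  # floor so a perfectly steady identity does not divide by zero
    return (current_count - mu) / sigma > Z_THRESHOLD


# Made-up sample data: one quiet API key, one busy CI token
history = {
    "svc-reporting-key": [4, 6, 5, 5, 7, 4, 6],
    "ci-deploy-token": [110, 95, 120, 105, 100, 115],
}
baselines = build_baselines(history)

print(is_anomalous("svc-reporting-key", 900, baselines))  # quiet key suddenly bursts
print(is_anomalous("ci-deploy-token", 118, baselines))    # busy token at its usual rate
```

The point of the design is that the threshold is relative to each identity. A rate of 118 requests per minute is routine for the CI token, while a far smaller jump would already stand out for the reporting key.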

Astrix’s Identity Graph visualizes NHIs and AI agents, mapping their connections to human owners and the permissions they hold across platforms and resources.

The bottom line

The Anthropic case is a reminder—not of a new threat model, but of how much easier and faster it has become for attackers to run the same campaigns they’ve always run. Scale is the story.

Astrix helps you get ahead of that shift with strong and automated NHI and AI agent discovery, behavior monitoring, and access governance—giving you the controls you need to contain abuse even when attackers move at AI speed.

Ready to see Astrix in action? Request a demo today.