What is in for me?

AI systems are undergoing a structural shift. The first wave of enterprise adoption focused on relatively bounded use cases: assistants that answered questions, copilots that suggested code, and chatbots that sat on top of static data. The next wave is agentic. Instead of merely predicting the next token, AI agents increasingly take actions: they call tools and APIs, orchestrate workflows, move money, change configurations, and trigger operations on behalf of humans and other systems.

As soon as AI stops being “just a model” and starts behaving as an operational actor, a familiar security problem emerges in a novel form: how do you see, control, and protect something that can think, plan, and act across your digital estate?

This is the core of AI Agent Security.

Defining AI Agent Security – And Why It Matters Now

What is AI Agent Security?

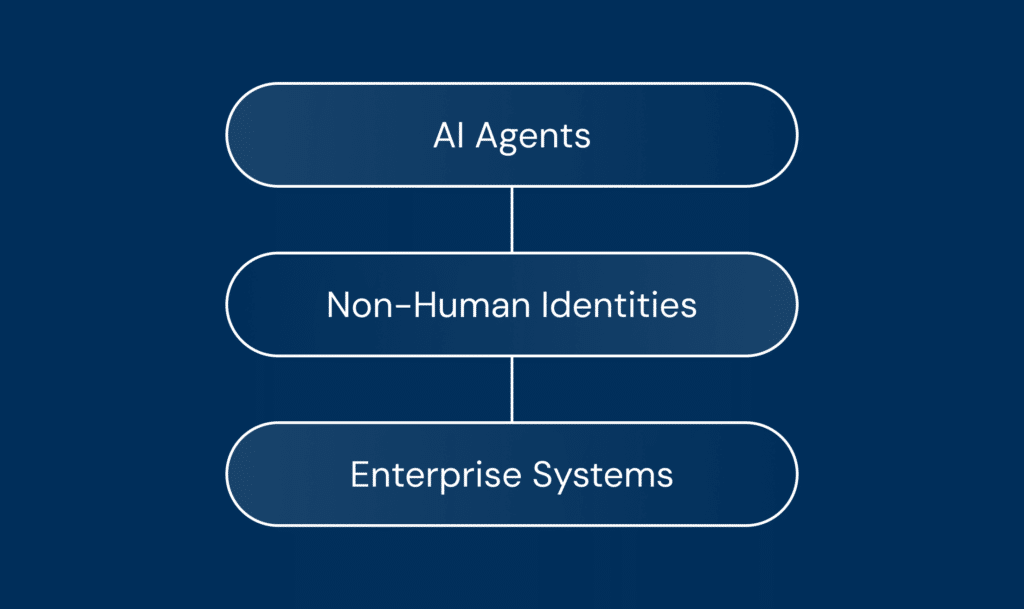

AI Agent Security is the set of practices, controls, and technologies that:

- Discover and inventory AI agents and their supporting identity layers (e.g., MCP servers, non-human identity layers).

- Assess and manage agents’ security posture across configuration, permissions, and integrations.

- Govern agent access and behavior across tools, APIs, data, and workflows.

- Detect, investigate, and respond to threats emerging from or targeting agents.

- Enforce guardrails and control policies at runtime, so agents operate within safe and intended bounds.

- Help practitioners design, deploy, and maintain secure-by-design agentic systems.

In other words, AI Agent Security is to agents what cloud security became to cloud infrastructure and what identity security became to human and machine accounts: a dedicated discipline that recognizes agents as first-class security subjects.

Why it is a growing priority

The urgency comes from converging trends:

- Shift from insight to action: early LLM deployments primarily generated content or recommendations. Agentic systems, by design, take actions (refunds, CRM updates, code changes, ticket escalation, payment initiation, configuration updates). Each action surface becomes a potential abuse or error surface.

- Explosion of agent footprints: organizations are building domain-specific agents (sales ops, finance, support) and adopting embedded agents inside SaaS products. As in the early cloud era, few enterprises maintain an accurate, up-to-date inventory of which agents exist, what they can do, and where they run.

- Novel, hybrid threat models: agents blend model-centric risks (prompt injection, data leakage), application risks (logic flaws, insecure tool integrations), identity and access risks (over-privileged agent identities), and operational risks (shadow agents, brittle guardrails). Traditional application security or IAM alone does not cover this blend.

The net effect is that many organizations are moving from ‘pilot and pray’ to ‘production with provable controls.’ Security and governance are becoming the gating factors for scaling agents responsibly.

What Practitioners Actually Want From AI Agent Security

Amid the noise, practitioners tend to express a consistent set of needs:

- “What agents do we have and what can they do?”

- “How do we prevent them from doing something harmful, wrong, or non-compliant?”

- “How will we know if something goes wrong, and how do we respond?”

- “How do we embed this into our existing security and governance stack without blocking innovation?”

These map to four core value propositions that practitioners expect from AI Agent Security:

- Visibility and understanding: An accurate, dynamic picture of agents (internal and third-party), their privileges, tools, and data access.

- Control and policy enforcement: Fine-grained, auditable constraints on what agents can do, per tool, per workflow, and per context.

- Assurance and trustworthiness: Evidence that behavior aligns with policy and regulatory requirements, with traceability of who (or what) did what, when, and why.

- Operational efficiency, not just risk reduction: Controls that scale with adoption, accelerate safe deployment, and integrate into existing Security Operations Center (SOC) and Identity and Access Management (IAM) systems.

In short, the value expectation enables agentic innovation at scale without losing control, helping security teams say “yes, but safely.”

Key Pillars of AI Agent Security

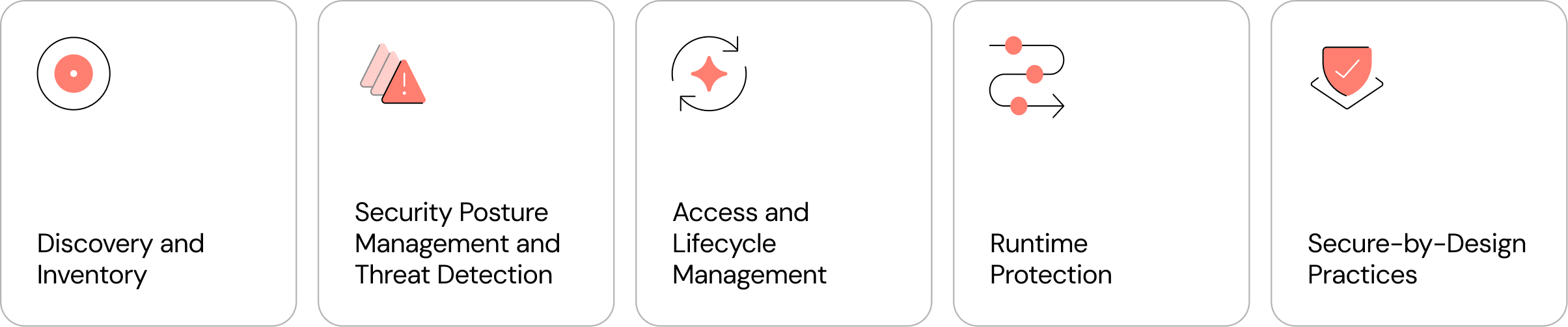

A comprehensive AI Agent Security approach can be understood as five pillars:

Discovery and inventory of AI agents and identity layers

The first pillar is deceptively simple: know what exists. Effective discovery extends beyond the model into orchestration, tooling, and identity layers.

- Internal agents built on in-house frameworks or orchestrators.

- Embedded agents inside SaaS tools and third-party products (e.g., CRM copilots, support agents, code assistants).

- Shadow agents created by teams without central oversight.

- Multi-agent systems where agents delegate to other agents.

- Tool and integration surfaces, including MCP servers or analogous tool servers that expose enterprise systems as agent-callable tools.

- Non-human identities (bots, API keys, service accounts, OAuth tokens, secrets) that agents use to authenticate and act.

A mature discovery capability answers continuously: which agents exist, where they run, what tools and data they can reach, under what identities they operate, and who owns them.

Security posture management and agentic threat detection

Once agents are visible, the next task is to assess posture and monitor for threats.

Posture management for AI agents

Agent posture management extends familiar concepts from cloud security posture management (CSPM) to the agent world, focusing on configuration and policy hygiene, blast radius, and guardrail coverage.

Examples of posture questions include: Are agents restricted to least-privilege tool sets? Are MCP servers exposing only necessary endpoints? Are prompts and policies aligned with organizational guidelines? Does approval gate high-risk operations? Is there adequate isolation between agents?

Agentic threat detection and response

Threat detection in agentic environments must consider both external adversaries and unintended internal behavior. Typical risks include prompt injection and tool manipulation, data exfiltration or lateral movement via tools, abuse of over-privileged agents, and model-driven logic errors (plausible but wrong actions).

Agent-aware detection looks beyond raw logs to semantic context: what was requested, what intermediate steps were taken, which tools were invoked with which parameters, and whether behavior deviated from policy or baseline.

Response mechanisms include halting or sandboxing an agent or tool call, inserting human-in-the-loop approvals for high-risk operations, triggering SOAR playbooks, and providing forensics that reconstruct the agent’s actions and decision path.

Access and lifecycle management

AI agents are new identities with their own lifecycle. Treating them as such is core to security.

Identity, access, and delegation

Key questions include authentication (long-lived secrets vs. ephemeral tokens vs. identity-aware proxies), authorization (least privilege at the agent and tool layers), and delegation semantics (when acting on behalf of a user, how identity and responsibility are preserved).

In many environments, non-human identity layers are the connective tissue: they represent agent identities, broker delegated access, and enable rotating, revoking, and scoping credentials at scale.

Lifecycle hygiene

Lifecycle hygiene is often overlooked but critical:

- Creation and onboarding: standardized agent creation with an owner, risk classification, and allowed tool set at birth.

- Change management: tracking and approving changes to prompts, tools, and policies; gating high-risk changes by review.

- Decommissioning: revoking credentials and cleaning up associated tool endpoints and secrets when an agent is retired.

Runtime protection: guardrails and access control in motion

If discovery and posture management are about static state, runtime protection is about dynamic behavior.

Runtime controls typically sit at the orchestration layer to intercept and inspect tool calls before execution, apply policies that modify/block/approve/log actions, enforce quotas and boundary conditions, and provide step-wise oversight across multi-step plans.

Defense-in-depth matters: combine model-level safeguards with tool-level policies and environmental controls so that bypassing any single layer does not grant unbounded freedom.

Secure-by-design practices

The most sustainable form of AI Agent Security is secure-by-design: building in defensibility from the design, testing, and deployment of agents.

Secure-by-design usually includes agent-specific threat modeling, reusable patterns and blueprints for safe tool integration and approvals, automated testing (including red-team style scenarios), and developer-facing documentation that makes the secure path the easiest path.