What's in it for me?

Gartner named agentic AI a top cybersecurity trend for 2026, warning that most organisations will face security incidents caused by AI agents before any controls are in place. In most enterprises, this is already happening.

Agents are being deployed across production environments faster than security teams can track them, each carrying its own OAuth grants, API tokens, and service account credentials. No one inventories them, no one rotates them, and no one owns them.

This creates something worse than shadow IT: an expanding layer of over-scoped permissions, unrotated keys, and service-to-service trust relationships that no IAM review cycle touches. Every new agent deployment widens the ungoverned non-human identity (NHI) attack surface.

The Security Risk Is in What Agents Do

Unlike chatbots, agents execute multi-step workflows autonomously. A single orchestrated chain might read a support ticket, query a customer database for context, draft a response, check inventory levels through an ERP API, and send an email, all without human intervention. This is why Gartner emphasises that the security focus must shift from filtering prompts to governing autonomous actions.

Tool-calling protocols like the Model Context Protocol (MCP) make this even more fluid, allowing agents to dynamically discover and invoke external services mid-execution. When an agent is compromised or simply misconfigured, its access footprint becomes the blast radius. An attacker who gains control of one step inherits the permissions of every system the agent can reach, and can propagate laterally through the pipeline before anyone notices.
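To make the idea of a blast radius concrete, here is a minimal sketch that treats it as the union of everything an agent's credentials can reach. The `Credential` type, the credential names, and the scope strings are all hypothetical and chosen to mirror the support-ticket workflow described above; a real inventory would pull this data from your identity provider and secrets store.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    """One non-human identity held by an agent (hypothetical model)."""
    name: str
    system: str
    scopes: frozenset[str]


def blast_radius(credentials: list[Credential]) -> dict[str, set[str]]:
    """Map each reachable system to the union of scopes granted on it."""
    reach: dict[str, set[str]] = {}
    for cred in credentials:
        reach.setdefault(cred.system, set()).update(cred.scopes)
    return reach


# Illustrative credentials for a single support agent (all names are assumptions).
agent_credentials = [
    Credential("helpdesk-oauth", "ticketing", frozenset({"tickets:read"})),
    Credential("crm-service-account", "crm",
               frozenset({"customers:read", "customers:write"})),
    Credential("erp-api-key", "erp", frozenset({"inventory:read"})),
    Credential("mail-token", "email", frozenset({"mail:send"})),
]

reach = blast_radius(agent_credentials)
for system, scopes in sorted(reach.items()):
    print(f"{system}: {sorted(scopes)}")
```

Compromising any single step of this agent exposes all four systems at once, which is why the footprint matters more than the entry point.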

The OWASP Agentic AI Top 10 identifies excessive agency as the root enabler: agents provisioned with far more access than they actually need, with no mechanism to review or reduce it over time.

Identity at Scale, Governance at Zero

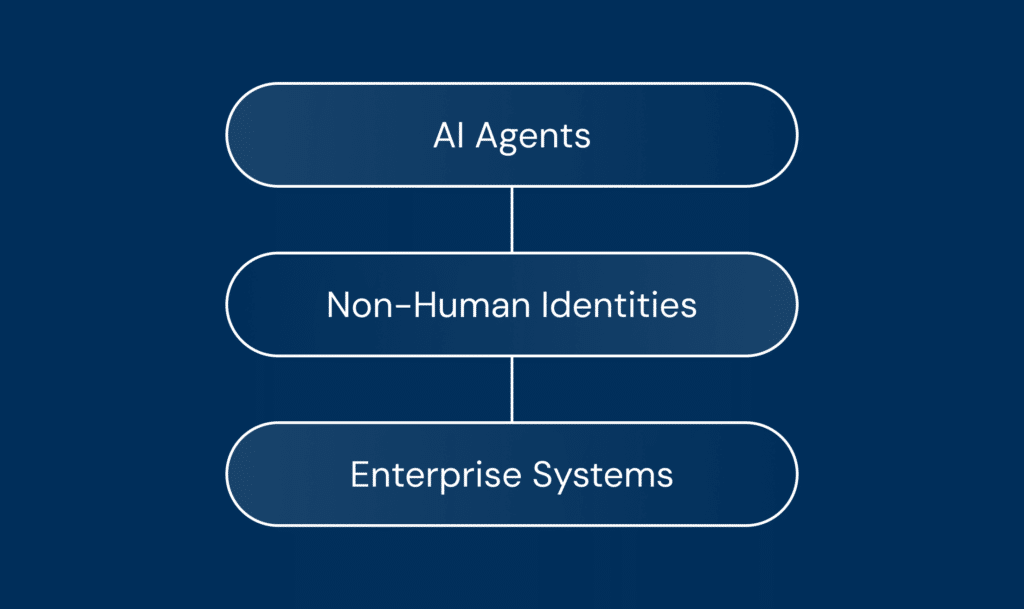

Almost every agent deployment generates a cluster of NHIs: service accounts, API keys, OAuth tokens, personal access tokens (PATs), secrets, and MCP credentials. These define what the agent can reach, what attackers go after, and what stays active long after the agent itself is decommissioned.

Astrix’s research shows the NHI-to-human ratio at 100:1 across enterprise environments, and most of those credentials live entirely outside standard IAM review cycles. They typically have no clear owner, rely on static long-lived secrets, and carry permissions that were set at deployment and never revisited.

As Francis Odum notes in his research on emerging agentic identity access platforms, AI agents are quickly becoming operational identities in their own right. The problem is that most enterprises are still managing them with IAM models designed for humans and basic service accounts.

When agents are granted autonomy without a corresponding governance layer, identity sprawl becomes inevitable. It’s also why Odum described NHI security as having “the makings to be one of the next big things across security.”

Each type of NHI carries its own risk properties, and understanding those differences is the foundation of governing the credential lifecycle.

To see how this plays out in practice, consider a developer who connects an agent to a service account with admin-level access because it was the fastest way to get it working. Months later, that developer moves to another team. Nobody reviews the permissions, nobody updates ownership, and when the agent is eventually deprecated, the service account stays right where it is: still live, still credentialed, invisible to any IAM review cycle.

Multiply this across dozens of agents, and the result is secret sprawl and privilege creep, quietly expanding the NHI attack surface with every new integration.
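The scenario above maps onto a handful of checks that can be run against an NHI inventory. The following is a sketch only: the `NHIRecord` fields, the 90-day rotation threshold, and the convention that admin scopes are named `admin` or end in `:admin` are assumptions made for illustration, not a standard.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class NHIRecord:
    """Inventory entry for one non-human identity (hypothetical schema)."""
    name: str
    owner: str | None
    agent_active: bool
    credential_active: bool
    last_rotated: date
    scopes: tuple[str, ...]


def hygiene_findings(record: NHIRecord, today: date,
                     max_age_days: int = 90) -> list[str]:
    """Return the governance gaps found for a single record."""
    findings = []
    if record.owner is None:
        findings.append("no owner")
    if record.credential_active and not record.agent_active:
        findings.append("orphaned: agent decommissioned, credential still live")
    if today - record.last_rotated > timedelta(days=max_age_days):
        findings.append("stale secret")
    if any(s == "admin" or s.endswith(":admin") for s in record.scopes):
        findings.append("admin-level access")
    return findings


# The service account from the example: owner gone, agent deprecated.
orphan = NHIRecord("agent-svc-account", None, False, True,
                   date(2025, 1, 10), ("admin",))
healthy = NHIRecord("ticket-reader", "platform-team", True, True,
                    date(2025, 11, 1), ("tickets:read",))

today = date(2025, 12, 1)
orphan_findings = hygiene_findings(orphan, today)
healthy_findings = hygiene_findings(healthy, today)
print(orphan_findings)
print(healthy_findings)
```

The abandoned service account trips every check, while a scoped, owned, recently rotated credential returns no findings.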

Recent Incidents Show What’s at Stake

In November 2025, ShinyHunters compromised OAuth tokens from a Gainsight integration and moved laterally across more than 200 customer Salesforce environments. The credentials were valid, the permissions were real, and nobody could see how far the access had spread until it was too late.

A month later, researchers found a similar pattern in OpenClaw, an AI agent framework that had reached 135,000 GitHub stars and connected to over 100 services through MCP. More than 42,000 instances were publicly exposed, 93% with authentication bypass vulnerabilities that granted persistent access to Salesforce, GitHub, and Slack.

Separately, browser extensions have exposed the same attack surface from a different angle. In December 2025, two malicious extensions with 900,000 combined installs quietly exfiltrated ChatGPT and DeepSeek conversations, including session tokens and source code.

Why Traditional Security Models Break

What connects these incidents is that the access behind them was never flagged, because nothing in the traditional security stack was looking for it:

EDR tells you what ran, network monitoring tells you where traffic went, and DLP tells you what left the building. But none of them can answer the question that actually determines your exposure: what was the agent authorised to do, and are those credentials still active?
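Answering that question requires a record of grants with their lifetimes, not just logs of activity. The sketch below assumes a hypothetical `Grant` record with issue, expiry, and revocation timestamps, and shows how both halves of the question could be answered from it. The agent name, systems, and dates are invented for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Grant:
    """One authorisation given to an agent (hypothetical schema)."""
    agent: str
    system: str
    scope: str
    issued_at: datetime
    expires_at: datetime | None = None   # None means the grant never expires
    revoked_at: datetime | None = None


def is_active(grant: Grant, at: datetime) -> bool:
    """True if the grant was usable at the given moment."""
    if at < grant.issued_at:
        return False
    if grant.expires_at is not None and at >= grant.expires_at:
        return False
    if grant.revoked_at is not None and at >= grant.revoked_at:
        return False
    return True


def authorised_scopes(grants: list[Grant], agent: str,
                      at: datetime) -> set[tuple[str, str]]:
    """What was this agent authorised to do at a given time?"""
    return {(g.system, g.scope) for g in grants
            if g.agent == agent and is_active(g, at)}


def utc(year: int, month: int, day: int) -> datetime:
    return datetime(year, month, day, tzinfo=timezone.utc)


grants = [
    Grant("support-agent", "crm", "customers:read", utc(2025, 1, 15)),
    Grant("support-agent", "erp", "inventory:read", utc(2025, 1, 15),
          expires_at=utc(2025, 4, 15)),
    Grant("support-agent", "email", "mail:send", utc(2025, 2, 1),
          revoked_at=utc(2025, 6, 1)),
]

during_incident = authorised_scopes(grants, "support-agent", utc(2025, 3, 1))
still_live = authorised_scopes(grants, "support-agent", utc(2025, 12, 1))
print(sorted(during_incident))
print(sorted(still_live))
```

In this example the CRM grant is the one to worry about: it has no expiry and was never revoked, so it remains live long after the other two have lapsed.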

This blind spot reflects a fundamental misalignment in how we approach AI security. As Gartner’s latest research points out, “the primary risk is not what the AI says, but what the AI does.” The focus must shift from prompt injection to agent behaviour analysis and identity traceability.

In many AI-related data breaches, the entry point was an NHI whose credentials were left ungoverned, with no owner and no expiry date. The pattern points to the same governance gaps across enterprise environments.

The starting point for closing these gaps is building a complete inventory of every agent, NHI, MCP server, and credential to establish a clear baseline. From there, teams can move into remediation: reducing excessive privileges, assigning ownership, and closing hygiene gaps. The final piece is making sure new agents arrive provisioned with least-privilege, short-lived credentials from day one.
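As an illustration of the last step, the sketch below issues a credential that carries only the scopes an agent needs and expires after a fixed time-to-live. It uses an HMAC-signed payload from the Python standard library purely to keep the example self-contained; the token format, function names, and 15-minute lifetime are assumptions, and a production deployment would rely on an established token service rather than a hand-rolled scheme.

```python
import base64
import hashlib
import hmac
import json


def issue_token(secret: bytes, agent: str, scopes: list[str],
                ttl_seconds: int, now: float) -> str:
    """Issue a signed token limited to the given scopes and lifetime."""
    payload = json.dumps(
        {"agent": agent, "scopes": sorted(scopes), "exp": now + ttl_seconds},
        sort_keys=True,
    ).encode()
    signature = hmac.new(secret, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode() + "."
            + base64.urlsafe_b64encode(signature).decode())


def check_token(secret: bytes, token: str, required_scope: str,
                now: float) -> bool:
    """Accept only untampered, unexpired tokens that carry the scope."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:  # malformed token or invalid base64
        return False
    expected = hmac.new(secret, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(payload)
    return now < claims["exp"] and required_scope in claims["scopes"]


secret = b"demo-signing-key"  # placeholder; never hard-code real keys
token = issue_token(secret, "support-agent", ["tickets:read"],
                    ttl_seconds=900, now=1000.0)

print(check_token(secret, token, "tickets:read", now=1500.0))     # in scope, in time
print(check_token(secret, token, "customers:write", now=1500.0))  # scope never granted
print(check_token(secret, token, "tickets:read", now=2000.0))     # past expiry
```

Because the scope list and expiry are fixed at issuance, a leaked token of this kind is useful to an attacker only for the named action and only until it expires.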

That progression is the identity-first approach, and it’s what the Discover–Secure–Deploy framework codifies. MCP sits at the centre of all of it as the protocol connecting agents to tools, data, and each other. The next chapter looks at how it works and where it breaks down.