NHI Governance for AI Agent Security in the Age of ChatGPT-5

Tools like OpenAI ChatGPT and Microsoft Copilot are no longer just assistants; they’re autonomous AI agents that execute tasks, connect to corporate systems, and augment human workflows.

With the release of GPT-5 on August 7, 2025, creating these agents has become dramatically easier. Any employee, regardless of technical skill, can now spin up a powerful, customized AI agent in minutes. This lowers the barrier to adoption, but it also significantly increases the risk of unmanaged access to corporate environments. AI agents operate through non-human identities (NHIs) and need privileged access via service accounts, API keys, and other tokens.

When creation is this fast and frictionless, NHIs can proliferate without oversight, leading to misconfigurations, over-permissive access, and potential exposure of sensitive systems.

How Do GPT-5 Features Increase Non-Human Identity and Access Risks?

- Adaptive Reasoning – Smarter agents can pick their own tools, risking over-granted permissions

- 256K Context Window – Processes full policies or datasets, raising stakes if access is misconfigured

- Integrated Tool Use – Direct links to APIs, email, code, and more mean more privileged NHIs in play

The Astrix Security platform for Agentic & Non-Human Identity Security leverages industry-leading NHI monitoring and access management to discover and inventory every AI agent—both managed and shadow—across your environment. We provide deep context into their permissions and real-time activity so you can control access, enforce governance, and stop threats before they escalate.

Our approach directly addresses OWASP Agentic AI threats such as:

- Tool Misuse (T2) – agents using tools in unintended ways

- Privilege Compromise (T3) – elevated access falling into the wrong hands

- Identity Spoofing (T9) – agents pretending to be something they’re not

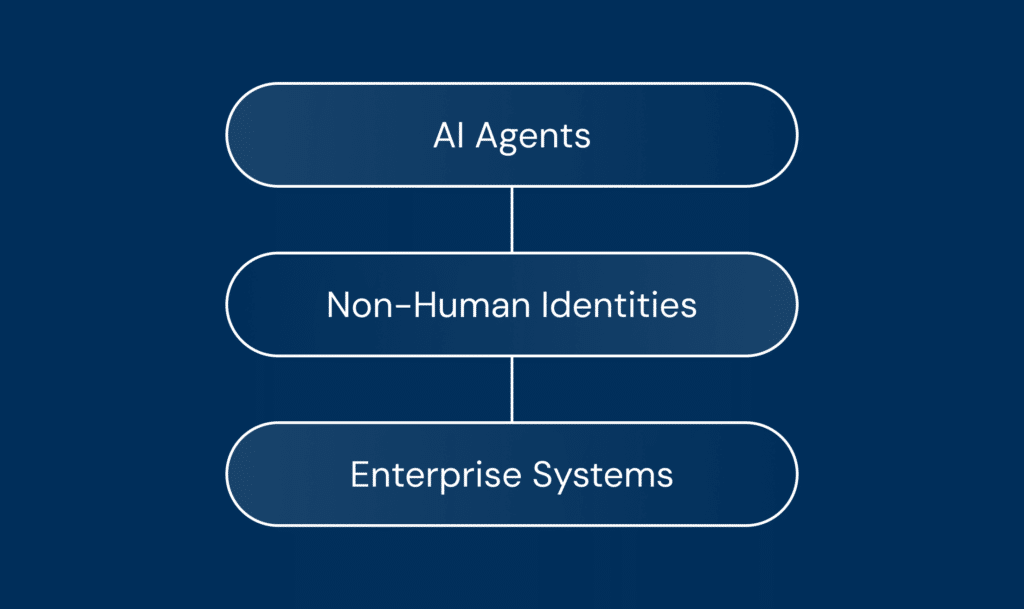

You cannot secure AI Agents without securing NHIs

The OWASP Top 10 for LLM Apps & Generative AI project makes it clear: “NHIs play a key role in agentic AI security.” Its research also explains why: “Agents often operate under NHIs when interfacing with cloud services, databases, and external tools. Unlike traditional user authentication, NHIs may lack session-based oversight, increasing the risk of privilege misuse or token abuse if not carefully managed.”

This 1-minute demo shows how tightly AI agents like custom ChatGPTs are connected to Non-Human Identities (NHIs) such as API keys.

It illustrates how easily an employee can unintentionally grant sensitive access and misconfigure an agent, leading to policy violations and serious security risks.

How Astrix Protects ChatGPT

1. Discovery & Visibility

- Inventory: Identify every custom and third-party GPT or Copilot, along with its associated NHIs.

- Owner mapping: Link each agent to a responsible human owner for accountability.

- Platform connections: See exactly which platforms—from Google Workspace to Salesforce—agents can access.

- Lineage graph: Visualize relationships between agents, NHIs, permissions, owners, and accessed resources.

2. Governance & Compliance

- Secure credentials: Rotate and protect API keys, tokens, and certificates.

- Approval workflows: Require governance sign-off before agents go live.

- Audit readiness: Maintain a comprehensive audit trail for compliance.

3. Risk Detection & Response

- Least privilege: Restrict agents to the minimal permissions required.

- Continuous monitoring: Detect spoofing, policy violations, or anomalous activity in real time.
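To make the least-privilege idea concrete, here is a minimal sketch of comparing the scopes an agent's NHI has been granted against the scopes it actually needs. It is illustrative only and does not represent how the Astrix platform works internally; the `AgentIdentity` record, the `excess_scopes` helper, and the scope names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    """Hypothetical inventory record for one agent and the NHI it uses."""
    agent_name: str
    owner: str  # responsible human owner
    granted_scopes: set[str] = field(default_factory=set)
    required_scopes: set[str] = field(default_factory=set)


def excess_scopes(identity: AgentIdentity) -> set[str]:
    """Return scopes that were granted but are not required."""
    return identity.granted_scopes - identity.required_scopes


# Example data: scope names are made up for illustration.
agent = AgentIdentity(
    agent_name="sales-report-gpt",
    owner="alice@example.com",
    granted_scopes={"crm.read", "crm.write", "drive.read"},
    required_scopes={"crm.read"},
)

print(sorted(excess_scopes(agent)))  # ['crm.write', 'drive.read']
```

Any scope in the result is a candidate for removal, or at least for a conversation with the agent's owner about why it was granted.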

From Risk to Reality: What We See in the Field

In our work with customers, we regularly uncover AI agent risks such as:

- Insecure agent connections – Agents linked to services or platforms through API keys embedded in code, hardcoded credentials, or weak authentication.

- Dormant NHIs – Credentials tied to agents that haven’t been active in months but are still valid.

- Unsafe sharing methods – Resources shared via public links or other insecure channels.

- Overexposed agents – Accessible to every user in the workspace, even without a business need.

- Orphaned agents – Still active but owned by departed employees or inactive accounts.

- Public exposure – Agents unintentionally available outside the organization.

- Privilege escalation – Paths for agents to gain elevated permissions, potentially leading to deep compromise.
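Several of the findings above can be expressed as simple checks over an inventory of agents and their credentials. The sketch below is a rough illustration, not a description of any real product's detection logic: the `NhiRecord` fields, the `find_risks` function, and the 90-day dormancy threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class NhiRecord:
    """Hypothetical inventory entry for a credential used by an agent."""
    name: str
    owner_active: bool      # False if the owner left or the account is inactive
    last_used: datetime     # last time the credential was used
    publicly_shared: bool   # True if the agent is reachable outside the org


def find_risks(record: NhiRecord, now: datetime,
               dormant_after: timedelta = timedelta(days=90)) -> list[str]:
    """Return the risk labels that apply to one record.

    The 90-day default is an assumed threshold, not a standard.
    """
    risks = []
    if now - record.last_used > dormant_after:
        risks.append("dormant")
    if not record.owner_active:
        risks.append("orphaned")
    if record.publicly_shared:
        risks.append("public exposure")
    return risks


# Example data: names and dates are made up for illustration.
now = datetime(2025, 9, 1)
records = [
    NhiRecord("hr-bot-key", owner_active=False,
              last_used=datetime(2025, 3, 1), publicly_shared=False),
    NhiRecord("docs-gpt-token", owner_active=True,
              last_used=datetime(2025, 8, 30), publicly_shared=True),
]

for record in records:
    print(record.name, find_risks(record, now))
# hr-bot-key ['dormant', 'orphaned']
# docs-gpt-token ['public exposure']
```

In practice the inputs would come from platform audit logs and identity-provider data rather than hand-written records, and thresholds would follow the organization's own policy.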

In our case study, a global brand used Astrix to uncover 250+ GPTs in ChatGPT Enterprise—some with admin-level access, PII exposure, and privilege escalation risks. With rapid mapping, monitoring, and governance controls, they remediated threats and scaled AI adoption without losing visibility or control.

To summarize…

AI security isn’t just about protecting models—it’s about securing the identities behind them. By governing NHIs, you can ensure every AI agent’s actions are authorized, monitored, and safe. With Astrix, you gain the visibility, control, and intelligence needed to embrace AI securely and at scale. Book a demo with our AI Security expert.