How a Major Enterprise Implemented a Control Plane Layer for AI Agents Using NHI Security

A control plane for AI agents is often described as a centralized system for governance, monitoring, and orchestration.

In practice, most organizations hit a more immediate problem:

They don’t know what their AI agents can access.

That’s where control breaks down.

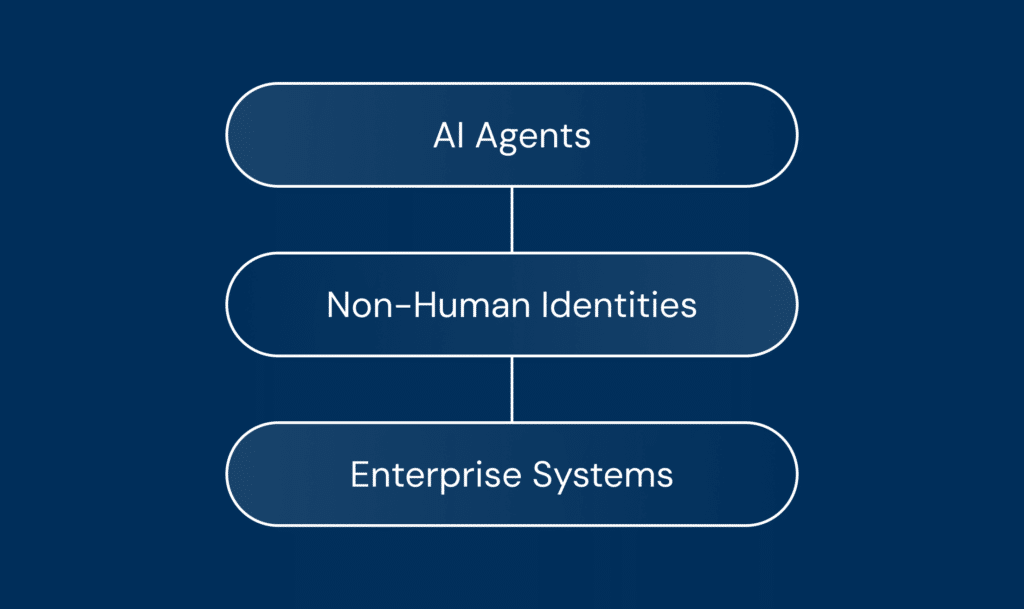

AI agents don’t access systems directly. They act through non-human identities such as API keys, OAuth tokens, and service accounts. Without visibility and control at this layer, monitoring and policies alone can’t prevent risk.

Most control plane approaches focus on monitoring and orchestration. They don’t address how access is actually controlled.

This case study shows how a major enterprise moved from early AI agent adoption to real control by gaining visibility into, and ownership of, the identities powering their AI agents.

The Problem: AI Agents Without a Control Layer

A long-time Astrix customer, already securing non-human identities (NHIs) across their enterprise, faced a new challenge: governing AI agents developed internally and through ChatGPT.

They piloted three agentic AI platforms:

- Vertex AI

- ChatGPT Enterprise

- Glean

They granted enterprise licenses to 200 developers within a broader engineering organization of thousands.

Within 1.5 months, they built approximately 400 GPTs:

- 240 were published

- the rest remained in draft

At this point, a critical question emerged from the GRC manager and CISO:

“What do these GPTs have access to?”

It was a simple question, but one they couldn’t answer.

Despite having governance policies in place, the organization had no visibility into the identities, permissions, and access paths behind these agents. They were already losing control of the environment.

What a Control Plane Is Supposed to Do

A control plane for AI agents is typically described as a system that:

- maintains an inventory of agents

- enforces policies

- monitors activity

- manages lifecycle and orchestration

These capabilities provide coordination and oversight.

But they don’t explain how control is enforced.

Control is not just about observing behavior; it’s about controlling access.

And access is defined at the identity layer.

For a deeper view into how this works in practice, see how Astrix supports AI agent governance and security across enterprise environments.

Where Control Actually Breaks: The Identity Layer

AI agents don’t operate in isolation. They act through non-human identities:

- API keys

- OAuth tokens

- service accounts

- external integrations

These identities define what the agent can access and act on.

Without visibility into these identities:

- ownership is unclear

- permissions accumulate

- access paths remain hidden

- risk grows silently

This isn’t just a monitoring gap; it’s an access control gap.
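To make the identity layer concrete, here is a minimal Python sketch of it as a data model. It assumes a simple inventory in which each agent lists the non-human identities it uses and each identity lists its grants. The names `Grant`, `NonHumanIdentity`, `Agent`, `effective_access`, and `unowned_identities` are hypothetical and are not Astrix’s API. The sketch shows why “What do these GPTs have access to?” is an identity question: an agent’s reach is the union of everything its identities can do, and an identity with no owner is a gap in accountability.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass(frozen=True)
class Grant:
    """One permission an identity holds on one resource (hypothetical model)."""
    resource: str    # e.g. "bigquery:prod.customers"
    permission: str  # e.g. "read" or "admin"


@dataclass
class NonHumanIdentity:
    kind: str                    # "api_key", "oauth_token", "service_account", ...
    name: str
    owner: Optional[str] = None  # None means no one is accountable for it
    grants: Set[Grant] = field(default_factory=set)


@dataclass
class Agent:
    name: str
    identities: List[NonHumanIdentity] = field(default_factory=list)


def effective_access(agent: Agent) -> Dict[str, Set[str]]:
    """Union of every grant reachable through the agent's identities."""
    access: Dict[str, Set[str]] = {}
    for identity in agent.identities:
        for grant in identity.grants:
            access.setdefault(grant.resource, set()).add(grant.permission)
    return access


def unowned_identities(agent: Agent) -> List[str]:
    """Names of identities the agent uses that have no accountable owner."""
    return [i.name for i in agent.identities if i.owner is None]


# Usage with made-up identities (hypothetical names throughout):
bq_sa = NonHumanIdentity("service_account", "bq-internal-sa",
                         grants={Grant("bigquery:prod.customers", "read")})
jira_token = NonHumanIdentity("oauth_token", "jira-bot", owner="dev-team",
                              grants={Grant("jira:PROJ", "admin")})
gpt = Agent("sales-helper-gpt", [bq_sa, jira_token])
print(effective_access(gpt))
# {'bigquery:prod.customers': {'read'}, 'jira:PROJ': {'admin'}}
print(unowned_identities(gpt))  # ['bq-internal-sa']
```

Modeling access as a property of identities rather than of agents means the same query answers both “what can this agent reach?” and “which agents share this credential?”.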

For a conceptual definition, see non-human identities.

Implementation: How Astrix Enabled Real Control

To address this, Astrix extended its NHI security platform to cover AI agents.

This gave the organization visibility into how agents were actually connected across systems:

- Inventory mapping of GPTs, identities, secrets, and permissions

- Detection of connected systems and shared data sources

- Real-time visibility into access and usage changes

- Policy recommendations to reduce risky configurations

This is part of how Astrix discovers and governs AI agents in real enterprise environments.

Beyond monitoring agents, the organization could now see and control how each agent interacted with data, systems, and credentials.
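Visibility into access changes can be illustrated with a simple snapshot diff. This is a hypothetical sketch, not how Astrix implements it. It assumes periodic inventory snapshots that map each agent to its (resource, permission) pairs, whereas real-time visibility would more likely be event-driven. Comparing two snapshots surfaces access an agent gained or lost between them.

```python
from typing import Dict, FrozenSet, Set, Tuple

# Hypothetical snapshot shape: agent name -> frozenset of (resource, permission).
Snapshot = Dict[str, FrozenSet[Tuple[str, str]]]
Changes = Dict[str, Dict[str, Set[Tuple[str, str]]]]


def diff_snapshots(before: Snapshot, after: Snapshot) -> Changes:
    """Report access each agent gained or lost between two snapshots.

    Agents that appear or disappear are compared against an empty set, so a
    new agent shows up only if it already holds some access.
    """
    changes: Changes = {}
    for agent in before.keys() | after.keys():
        old = before.get(agent, frozenset())
        new = after.get(agent, frozenset())
        gained, lost = set(new - old), set(old - new)
        if gained or lost:
            changes[agent] = {"gained": gained, "lost": lost}
    return changes


# Usage with made-up agents and resources:
before = {"hr-gpt": frozenset({("drive:hr-folder", "read")})}
after = {
    "hr-gpt": frozenset({("drive:hr-folder", "read"),
                         ("bigquery:prod.salaries", "read")}),
    "draft-gpt": frozenset(),
}
print(diff_snapshots(before, after))
# {'hr-gpt': {'gained': {('bigquery:prod.salaries', 'read')}, 'lost': set()}}
```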

What Was Discovered: Control Failures in the First Week

Within the first week, Astrix uncovered critical gaps:

- 250+ GPTs active in the environment

- ~25% of agents accessible by any user

- Some agents had direct access to sensitive systems, including production BigQuery tables

- Sensitive files were uploaded as knowledge sources for publicly accessible GPTs

- Some GPTs held admin-level scopes, letting them act on platforms such as Jira

- 10% had direct API access to external systems such as BigQuery and Atlassian
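Findings like these can be expressed as simple rules over an agent inventory. The sketch below assumes a hypothetical `GptRecord` with sharing, scope, external-API, and sensitive-file fields. Actual GPT platforms expose different metadata, and the sharing values, the `:admin` scope suffix, and the rule names are illustrative only.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical sharing values treated as "any user can open this GPT".
OPEN_SHARING = {"anyone_with_link", "public"}


@dataclass
class GptRecord:
    """Illustrative inventory record; real platforms expose different metadata."""
    name: str
    sharing: str  # "private", "workspace", or an OPEN_SHARING value
    scopes: List[str] = field(default_factory=list)         # e.g. "jira:admin"
    external_apis: List[str] = field(default_factory=list)  # e.g. "bigquery"
    sensitive_files: int = 0  # count of attached files classified as sensitive


def findings(gpt: GptRecord) -> List[str]:
    """Apply each illustrative rule and return the names of those that fire."""
    issues = []
    if gpt.sharing in OPEN_SHARING:
        issues.append("accessible by any user")
        if gpt.sensitive_files:
            issues.append("sensitive files in open GPT")
    if any(scope.endswith(":admin") for scope in gpt.scopes):
        issues.append("admin-level scope")
    if gpt.external_apis:
        issues.append("direct external API access")
    return issues


def share_with(inventory: List[GptRecord], issue: str) -> float:
    """Fraction of GPTs in the inventory that raise a given finding."""
    if not inventory:
        return 0.0
    return sum(issue in findings(g) for g in inventory) / len(inventory)


# Usage with a made-up four-GPT inventory:
inventory = [
    GptRecord("a", "public", sensitive_files=2),
    GptRecord("b", "private", scopes=["jira:admin"]),
    GptRecord("c", "workspace", external_apis=["bigquery"]),
    GptRecord("d", "private"),
]
print(findings(inventory[0]))  # ['accessible by any user', 'sensitive files in open GPT']
print(share_with(inventory, "accessible by any user"))  # 0.25
```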

One case stood out:

A developer had connected a GPT to BigQuery using a credential intended for internal use. This unintentionally exposed PII to ChatGPT.

This was immediately flagged as a high-risk scenario by the GRC manager and CISO.

This wasn’t just a policy issue.

It was an access control failure.
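The BigQuery case is a mismatch between where a credential was meant to be used and where it ended up. Here is a minimal sketch of a rule that would catch it, assuming each credential carries hypothetical `allowed_contexts` and `data_classes` tags and that platforms are classified as internal or external by a list the team maintains (`EXTERNAL_PLATFORMS` and all names below are illustrative).

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

# Hypothetical classification of where agents run.
EXTERNAL_PLATFORMS = {"chatgpt"}


@dataclass(frozen=True)
class Credential:
    name: str
    allowed_contexts: FrozenSet[str]  # e.g. {"internal"}: where it may be used
    data_classes: FrozenSet[str]      # e.g. {"pii"}: what it can reach


@dataclass(frozen=True)
class Connection:
    agent: str
    platform: str
    credential: Credential


def misused_credentials(connections: List[Connection]) -> List[Tuple[str, str, str]]:
    """Flag credentials used outside their intended context; PII raises severity."""
    alerts = []
    for conn in connections:
        context = "external" if conn.platform in EXTERNAL_PLATFORMS else "internal"
        if context not in conn.credential.allowed_contexts:
            severity = "high" if "pii" in conn.credential.data_classes else "medium"
            alerts.append((conn.agent, conn.credential.name, severity))
    return alerts


# Usage: one internal-only credential, connected in two places.
bq_sa = Credential("bq-internal-sa", frozenset({"internal"}), frozenset({"pii"}))
connections = [
    Connection("sales-gpt", "chatgpt", bq_sa),
    Connection("etl-job", "internal-scheduler", bq_sa),
]
print(misused_credentials(connections))  # [('sales-gpt', 'bq-internal-sa', 'high')]
```

The rule keys on the credential’s intended context rather than on the agent, so the same check applies no matter which agent or developer made the connection.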

Impact: From Unknown Access to Controlled Systems

With visibility into non-human identities and access paths, the organization was able to:

- Identify and remediate high-risk GPTs

- Restrict access to sensitive systems and data

- Implement enforceable governance controls

- Improve security posture while continuing AI adoption

Access control moved from implicit to enforced.

Most importantly, the organization could scale beyond the initial 200 developers with confidence, avoiding the significantly higher risk a full production rollout would otherwise have carried.

Why This Matters

Without visibility into what AI agents can access, there is no real control plane.

Monitoring, policies, and orchestration are not enough.

In practice, control depends on visibility and enforcement at the identity layer.

If you don’t know what your AI agents can access, you don’t have a control plane.

Schedule a Demo to see how Astrix makes what your AI agents can access visible and controllable.

Want to see how Astrix helps organizations securely scale AI agent adoption without losing governance or control? Learn more about our approach to securing non-human identities and governing AI agents in practice.