Don’t Just Discover AI Agents: Understand Their Risk

The rapid adoption of agentic AI has changed the security conversation inside organizations. Instead of asking “Is AI being used?”, security leaders now face a more complex and urgent set of questions: What are agents connected to? What permissions do they have? And what risk do they introduce into my environment?

AI agents are no longer isolated experiments owned by individual developers. They are increasingly embedded into core business workflows—connected to code repositories, SaaS platforms, internal data stores, and automation systems. While this connectivity is essential to realizing value, it also introduces new risks that are difficult for security teams to identify, contextualize, and prioritize.

The short video AI Agent Risk Engine in Action highlights the value customers gain from the visibility and risk scoring that Astrix brings to their AI inventory.

Understanding the New Risk Landscape

As organizations deploy AI agents at scale, several risk patterns are emerging:

- Improperly scoped agents that more consumers can use than intended

- Shadow integrations that bypass formal approval processes

- Overly permissive access to sensitive systems and data

For security teams, the challenge is not a lack of activity; it is a lack of context. Teams are expected to define standards, implement governance, and reduce risk while AI environments are still actively being built and iterated on.

To secure and govern AI effectively, organizations need more than surface-level visibility. They need context and a measurable way to understand both exposure and the likelihood of agent misuse.

A Shift to AI Platform Security

The pressure created by the rapid rise of AI agents—and the absence of mature security practices around them—has become increasingly clear over the past year.

More proof-of-value engagements now include AI agent platforms and monitoring capabilities as core evaluation criteria. Organizations are looking for solutions that can deliver immediate insight into existing AI usage, even as their broader AI strategy continues to evolve.

At Astrix, Solutions Engineers are not only helping customers inventory and understand the agents deployed in their environments; they are also actively reducing risk by identifying and remediating critical findings.

One of the most common use cases we see involves identifying agents with overly broad, organization-wide access and reducing that exposure by descoping permissions or removing the agents entirely. While unsanctioned tool connections remain a concern, teams are often more focused on how easily agents can be triggered to take action, especially when those agents have deep access to critical systems.

How Astrix Helps Secure Agentic AI

The Astrix Platform is designed to enable customers to discover, monitor, and secure AI agents across their environment—without slowing innovation.

At its core, Astrix delivers:

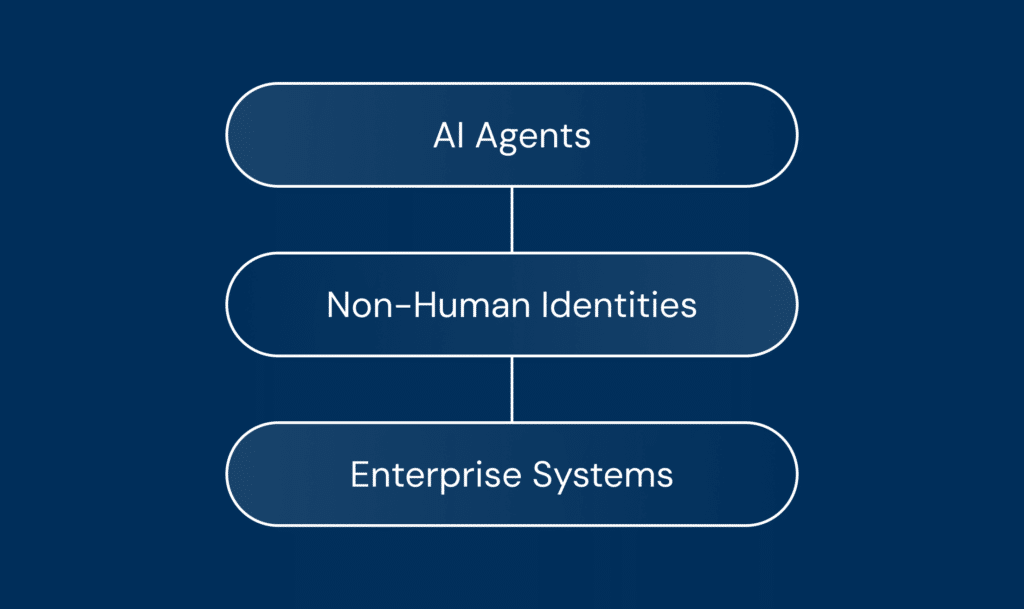

- Comprehensive inventory of all AI agents, MCP servers, and non-human identities (NHIs)

- Deep contextual insight into how each agent is configured, what it’s connected to, how it behaves, and the potential risk it introduces

- Detection and remediation of excessive privileges, vulnerable configurations, abnormal activity, and policy violations

However, visibility alone doesn’t solve prioritization. That’s where the Astrix AI Agent Risk Engine comes in.

Astrix’s risk engine automatically assesses each AI agent’s exposure and likelihood of misuse, translating complex configuration and usage data into a clear, actionable risk score.

The engine applies a balanced rule model:

- Negative rules elevate risk when dangerous conditions are detected

- Positive rules reduce risk when strong security controls and best practices are in place

The result is an immediate, accurate view of each agent’s security posture—without manual analysis or guesswork.
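To make the balanced rule model concrete, here is a minimal Python sketch of how negative and positive rules could combine into a single score. Every field name, rule, weight, the baseline of 20, and the 0 to 100 scale are illustrative assumptions, not Astrix's actual rules or implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class AgentConfig:
    """Hypothetical snapshot of the signals collected for one AI agent."""
    name: str
    org_wide_access: bool = False           # any user in the org can trigger the agent
    unapproved_tool_connections: int = 0    # integrations that bypassed approval
    writes_to_critical_systems: bool = False
    scoped_to_named_users: bool = False     # consumers explicitly restricted
    requires_human_approval: bool = False   # actions gated by a human reviewer

@dataclass
class Rule:
    name: str
    matches: Callable[[AgentConfig], bool]
    points: int  # magnitude of the adjustment when the rule matches

# Negative rules (hypothetical): each adds points when a dangerous condition is detected.
NEGATIVE_RULES = [
    Rule("org-wide access", lambda a: a.org_wide_access, 35),
    Rule("unapproved tool connections", lambda a: a.unapproved_tool_connections > 0, 20),
    Rule("writes to critical systems", lambda a: a.writes_to_critical_systems, 30),
]

# Positive rules (hypothetical): each subtracts points when a strong control is in place.
POSITIVE_RULES = [
    Rule("scoped to named users", lambda a: a.scoped_to_named_users, 15),
    Rule("human approval required", lambda a: a.requires_human_approval, 20),
]

BASELINE = 20  # assumed starting score for any agent

def score_agent(agent: AgentConfig) -> Tuple[int, List[str]]:
    """Return a 0-100 risk score plus the names of the rules that fired."""
    total = BASELINE
    fired: List[str] = []
    for rule in NEGATIVE_RULES:
        if rule.matches(agent):
            total += rule.points
            fired.append(rule.name)
    for rule in POSITIVE_RULES:
        if rule.matches(agent):
            total -= rule.points
            fired.append(rule.name)
    # Clamp so the score always stays on the 0-100 scale.
    return max(0, min(100, total)), fired
```

With these assumed weights, an agent with org-wide access that writes to critical systems scores 85. The same agent with org-wide access removed, scoped to named users, and gated by human approval scores 15. Returning the fired rule names alongside the number keeps the score explainable, so a reviewer can see why an agent ranks where it does.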

Impact for Customers

By contextualizing and quantifying risk, security teams can move from reactive firefighting to proactive control. With Astrix, teams can:

- Prioritize high-risk agents before they become incidents

- Approve agents with confidence, knowing that risk is continuously evaluated

- Automatically revoke approvals if an agent’s risk level increases
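The last capability, revoking an approval when risk rises, can be sketched as a check that compares an agent's current risk level against the level recorded when it was approved. This is a hypothetical Python illustration: the `RiskLevel` bands, their score thresholds, and the `Approval` record are assumptions for the example, not part of the Astrix Platform.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional

class RiskLevel(IntEnum):
    """Hypothetical risk bands; IntEnum makes the levels comparable."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4

def level_for(score: int) -> RiskLevel:
    """Map a 0-100 score to a band (thresholds are assumptions)."""
    if score >= 80:
        return RiskLevel.CRITICAL
    if score >= 60:
        return RiskLevel.HIGH
    if score >= 30:
        return RiskLevel.MEDIUM
    return RiskLevel.LOW

@dataclass
class Approval:
    agent: str
    approved_level: RiskLevel          # risk level at the time of approval
    active: bool = True
    revoked_reason: Optional[str] = None

def reevaluate(approval: Approval, new_score: int) -> bool:
    """Re-check an approval against the agent's latest risk score.

    The approval is revoked when the current level rises above the level
    recorded at approval time. A revoked approval stays revoked; re-approval
    is left to a human reviewer. Returns whether the approval is still active.
    """
    current = level_for(new_score)
    if approval.active and current > approval.approved_level:
        approval.active = False
        approval.revoked_reason = (
            f"risk rose from {approval.approved_level.name} to {current.name}"
        )
    return approval.active
```

For example, an agent approved at `MEDIUM` stays approved while its score remains in the 30 to 59 band, but once a configuration change pushes it to 70 (`HIGH`), `reevaluate` revokes the approval and records why. Keeping revocation one-way is a deliberate choice in this sketch: a later drop in score does not silently restore access.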