Beyond the Prompt: Gartner Recognizes Astrix in New Report on the Future of AI Agent Security

Gartner has published a new Emerging Tech research note, “Emerging Tech: The Future of AI Security Is in Securing Agent Actions, Not Prompts,” authored by Mark Wah and David Senf.

The report makes a pointed argument: the AI security market is focused on the wrong layer. Prompt filtering was built for chat-based AI, not for autonomous agents that take actions, use tools, and operate across enterprise systems without human oversight. The new reality requires a fundamentally different security model, one grounded in identity, access, and behavioral control at runtime. Astrix is cited as a sample vendor in this critical shift toward behavioral and action-based AI agent security.

TL;DR

- In this new research note, Gartner argues the AI security market is solving the wrong problem. Prompt filtering addresses chat-based AI; it does not address autonomous agents that take actions, call tools, and interact with enterprise systems without human oversight.

- Gartner draws a hard line between securing nonagentic AI (prompt guardrails) and securing AI agents (behavioral and action security). The risks are different, the mechanisms are different, and the controls must reflect that.

- Astrix is cited as a representative vendor in the AI agent security category.

- Key prediction: over 50% of successful attacks against AI agents through 2029 will exploit access control issues.

- Gartner’s practical guidance for security leaders:

  - Prioritize agent discovery and MCP security in the near term.

  - Enforce real-time action authorization within the agent execution loop.

  - Establish IAM for AI agents as the foundation before scaling agentic AI deployments.

What the Gartner report covers

The central argument of this research is that AI security can no longer focus exclusively on what a model says; it must govern what an agent does.

Agents are stateful. They reason across multiple steps, use tools, write to systems, and communicate with other agents, often without a prompt interface at all. A text classifier evaluated in isolation cannot detect tool misuse, logic drift, unauthorized resource access, or credential abuse. Gartner argues that the entire control model needs to move into the agent’s cognitive and execution loop.

Gartner makes the distinction explicit. Securing nonagentic AI is a text problem: inspect prompts, filter toxic content, catch data leakage in natural language. The controls are stateless, evaluated prompt by prompt, with no memory of what came before. Securing AI agents is an access and behavior problem:

- The primary risks are tool misuse, logic and intent drift, unauthorized resource consumption, and authentication failures.

- Visibility must extend across agent-to-agent, agent-to-tools, and machine-to-machine interactions, not just user-to-LLM.

- Controls require API hooking, IAM integrations, and runtime enforcement, not text classifiers.

- Agent behavior spans multiple steps, tools, and systems, where each action builds on the last.

This distinction matters for anyone evaluating AI security vendors.

Why this matters for the enterprise

The move toward autonomous multi-agent systems introduces risks that traditional controls cannot mitigate, such as behavioral drift, tool misuse, and goal hijacking.

Gartner’s strategic predictions underscore the urgency for security leaders:

- Through 2029, over 50% of successful attacks against AI agents will exploit access control issues.

- By 2029, over 25% of enterprises will adopt “guardian agents,” independent supervisory entities, to monitor and block rogue behaviors at scale.

What Gartner recommends

Near-term priority: Gartner recommends starting with visibility and ecosystem security, which covers agent discovery, MCP security, misconfiguration detection, and detection of excessive permissions. The finding is straightforward: organizations cannot govern agents they cannot see.
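To make the excessive-permissions idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Gartner's method or the Astrix implementation: the `AgentRecord` type, the scope names, and the inventory are all hypothetical. It assumes a discovery step has already produced, for each agent, the scopes it was granted and the scopes it was actually observed using.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One discovered agent; all fields are hypothetical for this sketch."""
    name: str
    granted_scopes: set[str]
    used_scopes: set[str] = field(default_factory=set)


def excessive_scopes(agent: AgentRecord) -> set[str]:
    """Scopes the agent holds but was never observed using."""
    return agent.granted_scopes - agent.used_scopes


# Made-up inventory, standing in for the output of an agent discovery step.
inventory = [
    AgentRecord(
        "support-bot",
        {"tickets:read", "tickets:write", "users:delete"},
        {"tickets:read", "tickets:write"},
    ),
    AgentRecord("report-bot", {"sales:read"}, {"sales:read"}),
]

# Keep only agents with unused grants; sort scopes for stable output.
report = {
    a.name: sorted(excessive_scopes(a))
    for a in inventory
    if excessive_scopes(a)
}
print(report)  # {'support-bot': ['users:delete']}
```

The set difference is the whole idea: anything granted but unused is a candidate for removal. It also only works once the inventory exists, which is why discovery comes first.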

Post-visibility priority: From there, Gartner recommends enforcing behavioral control and real-time action authorization within the agent’s execution loop, with in-line security capabilities that can block destructive operations before execution. IAM for agents is framed as the mandatory foundation, with failure to define and manage agent identity and access cited as a key blocker to enterprise-scale deployment.
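As a rough illustration of what action authorization inside the execution loop can look like, the Python sketch below checks every proposed tool call against a deny-by-default policy before it runs. Everything here is an assumption made for the example: the `Action` type, the `POLICY` table, the agent and tool names, and the `fake_tool` stand-in are hypothetical and do not come from the Gartner report or from any Astrix API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """A tool call the agent proposes to make (hypothetical shape)."""
    agent_id: str
    tool: str
    operation: str


# Hypothetical allow-list: agent id -> permitted (tool, operation) pairs.
POLICY = {
    "billing-agent": {("invoices", "read"), ("invoices", "write")},
}


def authorize(action: Action) -> bool:
    """Deny by default: allow only pairs explicitly granted to this agent."""
    return (action.tool, action.operation) in POLICY.get(action.agent_id, set())


def run_step(action: Action, execute: Callable[[Action], str]) -> str:
    """Check the policy in-line, before the tool call is made."""
    if not authorize(action):
        return f"blocked: {action.agent_id} may not {action.operation} {action.tool}"
    return execute(action)


def fake_tool(action: Action) -> str:
    # Stand-in for a real tool invocation.
    return f"ok: {action.operation} {action.tool}"


print(run_step(Action("billing-agent", "invoices", "read"), fake_tool))
# ok: read invoices
print(run_step(Action("billing-agent", "invoices", "delete"), fake_tool))
# blocked: billing-agent may not delete invoices
print(run_step(Action("unknown-agent", "invoices", "read"), fake_tool))
# blocked: unknown-agent may not read invoices
```

The check keys on the agent's identity and the action it is about to take, not on the text of any prompt. A destructive operation that was never granted is stopped before execution, and an agent with no defined identity gets no access at all.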

How Astrix aligns with the Gartner perspective

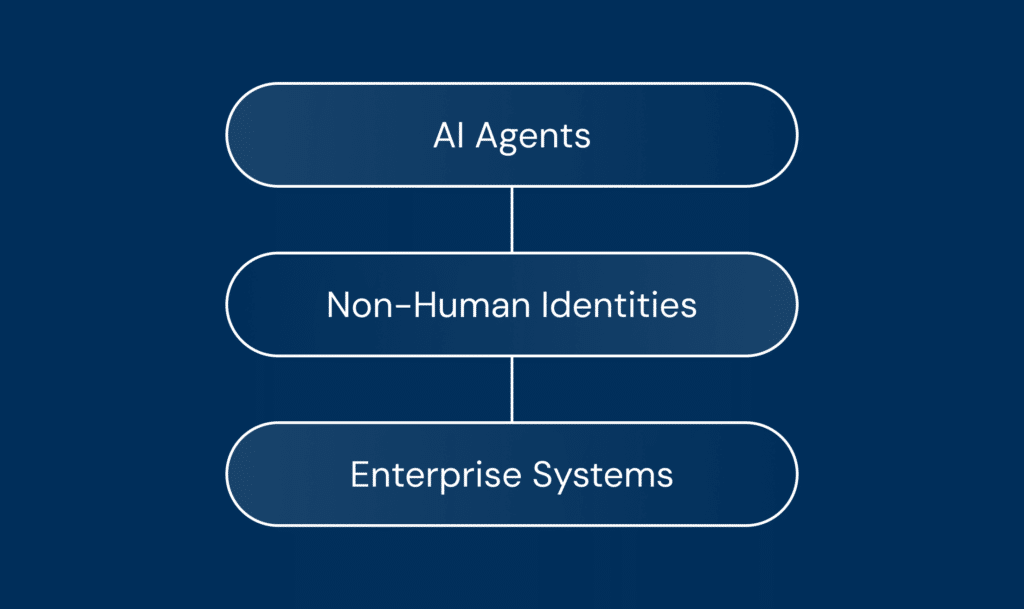

The report’s recommendations fully align with the Astrix mission: Astrix secures AI agents and the non-human identities (NHIs) that connect them to critical systems. Our platform provides discovery of AI agents, MCP servers, and NHIs, along with threat detection, access governance, and controlled deployment, for safe, enterprise-scale AI agent adoption. Gartner places Astrix in the AI agent security category focused on behavioral and execution-layer controls.

Astrix aligns with this vision through three core pillars:

- Discovery First: You cannot govern agents you cannot see. Astrix continuously discovers AI agents, non-human identities, MCP servers, secrets, and shadow AI.

- Secure the Non-Human Identity Layer: An agent’s blast radius is defined by the identities and permissions it holds. Securing AI agents requires securing the NHIs they operate through.

- Enforce at Runtime: Security must operate inside the agent’s cognitive and execution loop. Astrix ensures context-led visibility into AI agents, MCP servers, and NHIs, detects anomalous behavior, enables policy-based access control, and reduces excessive permissions before actions turn into incidents.

Being recognized by Gartner in this report validates our commitment to providing a clean, actionable framework for agentic AI security.

Ready to see how Astrix helps your enterprise discover, secure, and deploy AI agents responsibly? Book a demo today.

Gartner, Emerging Tech: The Future of AI Security Is in Securing Agent Actions, Not Prompts, Mark Wah, David Senf, 20 February 2026.

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and is used herein with permission. All rights reserved.

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.