MCP’s First Year: The Missing Security Pieces Are Finally Falling Into Place (Part 2)

In Part 1 of this series, we examined how the Model Context Protocol (MCP) matured over its first year by closing critical security and authorization gaps that limited enterprise adoption. With those foundations in place, a new set of challenges came into focus — not around who agents are allowed to act as, but how they actually use tools and knowledge at scale.

As MCP adoption surged, it became clear that simply exposing more tools to agents wasn’t enough. Agents began to struggle with context overload, inefficient tool selection, and a lack of shared organizational knowledge. In response, Anthropic has been refining how Claude discovers tools and learns enterprise-specific context — introducing advanced tool discovery mechanisms and a new concept called Agent Skills.

This second part dives into those developments, exploring how smarter tool use and skill discovery are shaping the next phase of enterprise-grade AI agents.

Claude Advanced Tool Use & Skills

MCP has been out for a year. And what a year it was: the protocol has gained traction beyond what anyone could have guessed, to the point where thousands of MCP servers are now indexed in various marketplaces. Our own sampling-based estimate put the total at roughly 20,000 MCP server implementations.

It is clear that this rapid adoption has paved the way for AI agents to reach their full potential: getting relevant context from internal enterprise data and taking actions on your behalf, without you ever needing to leave your chat interface.

Throughout the year, however, issues came to light with how AI agents use these tools. Describing the available tools to the agent in natural language is a great way to adapt an API designed for code into something an LLM can easily understand, but it causes an explosion in context usage.

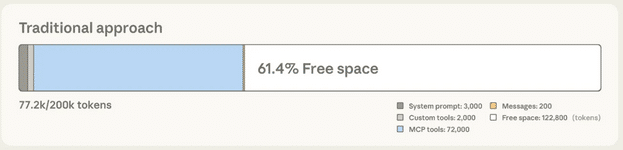

In a simple experiment conducted by Anthropic to showcase the problem, Claude was connected to five MCP servers commonly used by a developer-assistant agent: GitHub, Slack, Sentry, Grafana, and Splunk.

In total, these servers expose 58 tools whose definitions add up to approximately 55,000 tokens. All of these tokens are consumed before the conversation even starts, and they include every detail about the tools: their definitions, parameters, and expected responses.

That’s…a lot. It causes agents to get overwhelmed and consequently choose the wrong tool or make mistakes in invocation parameters. Usually, the agent can overcome this by inspecting errors in the tool’s response, but all of this back-and-forth requires even more context.
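To put those figures in perspective, here is a back-of-the-envelope calculation. The tool count and token total come from the experiment above; the 200,000-token context window is an assumption chosen purely for illustration, and the helper names are hypothetical.

```python
# Figures quoted above from Anthropic's experiment.
TOOL_COUNT = 58
TOOL_DEFINITION_TOKENS = 55_000

# Assumption for illustration only: a 200,000-token context window.
ASSUMED_CONTEXT_WINDOW = 200_000


def average_tokens_per_tool(total_tokens: int, tool_count: int) -> float:
    """Average size of a single tool definition, in tokens."""
    return total_tokens / tool_count


def share_of_window(total_tokens: int, window: int) -> float:
    """Fraction of the context window taken up by tool definitions."""
    return total_tokens / window


avg = average_tokens_per_tool(TOOL_DEFINITION_TOKENS, TOOL_COUNT)
share = share_of_window(TOOL_DEFINITION_TOKENS, ASSUMED_CONTEXT_WINDOW)

print(f"~{avg:.0f} tokens per tool definition on average")
print(f"{share:.1%} of the assumed window used before the first message")
```

Under that assumed window size, more than a quarter of the context is gone before the user has typed anything.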

A solution to this problem is in order, and Anthropic has come up with a brilliant one – a unique tool dedicated to discovering other tools. (Yo dawg).

A new defer_loading field, set on the tool definitions passed to Claude (including those that come from MCP servers), tells the client not to load those definitions into the agent's context up front. Instead, when the agent decides a tool call might be needed, it invokes the search tool, which looks for relevant tools using either regular expressions or BM25 (Best Matching 25) ranking. Only the top matching tools (if any) are loaded into the agent's context.

This way, the agent avoids polluting its context with irrelevant tools and sees only the tools that are relevant to its current task.
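The idea can be sketched locally in a few lines. This is a simplified illustration rather than Anthropic's implementation: the `ToolDef` class, the catalog entries, and the `search_tools` helper are all hypothetical, and only the regex variant of the search is shown.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolDef:
    """Hypothetical, trimmed-down tool definition."""
    name: str
    description: str
    defer_loading: bool = True  # deferred tools stay out of the initial context


# Hypothetical catalog; real servers expose far more tools than this.
CATALOG = [
    ToolDef("github_create_pull_request", "Open a pull request in a GitHub repository"),
    ToolDef("github_list_issues", "List issues in a GitHub repository"),
    ToolDef("slack_post_message", "Post a message to a Slack channel"),
    ToolDef("sentry_get_issue", "Fetch details of a Sentry error issue"),
    ToolDef("grafana_query_dashboard", "Query a Grafana dashboard panel"),
]


def initial_context(catalog):
    """Tools visible before any search: only those that are not deferred."""
    return [tool for tool in catalog if not tool.defer_loading]


def search_tools(catalog, pattern, limit=3):
    """Return up to `limit` deferred tools whose name or description matches."""
    regex = re.compile(pattern, re.IGNORECASE)
    hits = [
        tool for tool in catalog
        if tool.defer_loading
        and (regex.search(tool.name) or regex.search(tool.description))
    ]
    return hits[:limit]


print(len(initial_context(CATALOG)))  # nothing is loaded up front
print([tool.name for tool in search_tools(CATALOG, r"pull.request")])
```

Only the definitions returned by the search would then be added to the agent's context; everything else in the catalog costs no tokens.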

Currently, tool search is available in beta when building agents directly on Claude's API, but I foresee it reaching mainstream agents pretty quickly.

Agent Skills

Continuing on a similar note, experience with agents has surfaced another issue: enterprise agents are often unfamiliar with the knowledge and organizational context that everyone inside the enterprise takes for granted.

In many instances, organizations have worked around this with rules files and ever-larger system prompts that include this knowledge, but this approach doesn't scale.

A new, interesting solution by Anthropic is to create Agent Skills – a file-based approach that allows the agent to progressively discover skills relevant to its current task.

To use skills, you create a skills directory in which each subdirectory contains the knowledge associated with one specific skill. The main file is SKILL.md. Its short description, which states what the skill provides, is always loaded into the agent's context. The agent can then decide when to load the rest of the skill.
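A minimal sketch of that first discovery step follows. It assumes each SKILL.md opens with a frontmatter block delimited by `---` lines that carries `name` and `description` fields; the `brand-guidelines` skill and the helper functions are hypothetical and exist only for this example.

```python
import tempfile
from pathlib import Path


def read_frontmatter(skill_file: Path) -> dict:
    """Parse simple `key: value` pairs between the leading '---' markers."""
    lines = skill_file.read_text(encoding="utf-8").splitlines()
    meta = {}
    if not lines or lines[0].strip() != "---":
        return meta  # no frontmatter present
    for line in lines[1:]:
        if line.strip() == "---":
            break  # end of frontmatter; the skill body below is ignored here
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def index_skills(skills_dir: Path) -> dict:
    """Map each skill's name to its short description."""
    index = {}
    for skill_file in sorted(skills_dir.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_file)
        name = meta.get("name", skill_file.parent.name)
        index[name] = meta.get("description", "")
    return index


# Demo with a throwaway directory and one hypothetical skill.
with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "skills" / "brand-guidelines"
    skill_dir.mkdir(parents=True)
    (skill_dir / "SKILL.md").write_text(
        "---\n"
        "name: brand-guidelines\n"
        "description: How to apply the company brand to documents\n"
        "---\n"
        "# Brand guidelines\n"
        "Colors, typography, and logo usage go here.\n",
        encoding="utf-8",
    )
    skill_index = index_skills(Path(tmp) / "skills")

print(skill_index)
```

Only this small index needs to sit in the agent's context permanently; the body of each SKILL.md is read later, if and when the skill turns out to be relevant.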

For example, you can have a skill on guidelines for how to apply the company brand. Beyond the general description, the main skill file contains the company name, its color palette, typography, etc.

The skill can also bundle useful scripts, for instance ones that apply the brand to specific file formats such as PowerPoint, Excel, and PDF. The agent can decide to load those scripts if they're relevant and skip adding them to the context otherwise.
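The selective loading of bundled scripts might look roughly like the sketch below. In a real agent the model itself decides what is relevant; the keyword match here is only a stand-in for that decision, and the `scripts` subdirectory, the file names, and both helper functions are assumptions made for illustration.

```python
import tempfile
from pathlib import Path


def available_scripts(skill_dir: Path) -> list[str]:
    """List script file names bundled with a skill without reading them."""
    scripts_dir = skill_dir / "scripts"
    if not scripts_dir.is_dir():
        return []
    return sorted(p.name for p in scripts_dir.iterdir() if p.is_file())


def load_relevant_scripts(skill_dir: Path, task: str) -> dict[str, str]:
    """Read only the scripts whose format suffix appears in the task text."""
    loaded = {}
    task_lower = task.lower()
    for name in available_scripts(skill_dir):
        fmt = Path(name).stem.split("_")[-1]  # apply_brand_pptx.py -> "pptx"
        if fmt in task_lower:
            loaded[name] = (skill_dir / "scripts" / name).read_text(encoding="utf-8")
    return loaded


# Demo with a throwaway skill directory holding three placeholder scripts.
with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "brand-guidelines"
    (skill_dir / "scripts").mkdir(parents=True)
    for fmt in ("pptx", "xlsx", "pdf"):
        script = skill_dir / "scripts" / f"apply_brand_{fmt}.py"
        script.write_text(f"# placeholder: apply brand to {fmt}\n", encoding="utf-8")

    all_scripts = available_scripts(skill_dir)
    loaded = load_relevant_scripts(
        skill_dir, "Restyle quarterly_review.pptx with the company brand"
    )

print(all_scripts)
print(sorted(loaded))
```

Of the three bundled scripts, only the PowerPoint one is read for this task; the other two never enter the context.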

Skills are a strong format for the progressive discovery of organizational context: knowledge that the agent's users already have, but that agents would not otherwise acquire without significant effort. With skills, you make the effort once, and from then on the agent can manage the knowledge that pertains to the task at hand on its own. Fortunately for the community, Anthropic has released Agent Skills as an open standard, allowing for easy interoperability across different agentic infrastructures.

Summary

Over the past year, marked by the incredible pace of agentic AI adoption, many new issues have surfaced, some of which we couldn't even have imagined a year ago.

Luckily, recent advances in the MCP specification and new features for agents, such as skills and tool discovery, are working to mitigate these issues and pave the way for a hopeful future of embedding AI agents in every employee’s daily work across organizations.